Context

Overhauling Mercedes-Benz’s ad analytics platform

The Media Decision Engine is Mercedes-Benz’s paid media analytics platform, used to track, measure, and optimise advertising performance across all paid media channels. The platform had been built by OMD (one of Interbrand’s sister agencies) and had grown in capability over the years without dedicated product design input; Interbrand was brought in to overhaul it.

- Vehicles sold worldwide, 2025: 2.16m

- Mercedes-Benz Group revenue, 2025: €132.2bn

- Invented the automobile: 1886

- Among the world's most valuable brands: Top 10

- Media channels optimised: 7

- Media analytics tools, regrouped into three stages: 15

Sources: Mercedes-Benz Group FY2025 results and Interbrand Best Global Brands 2025

Problem

A powerful platform obscured by its interface

The Media Decision Engine was a capable platform held back by its interface.

- Information architecture. The platform never properly explained itself. Users struggled to understand what it was for and what each individual tool did.

- Inconsistency across tools. Each tool had been built in isolation. Flows, interaction patterns, and data visualisation all varied from tool to tool, forcing users to re-learn the interface every time they switched tools.

- Exporting data. Export formats were limited, and there was no way to batch exports across multiple tools, forcing users to repeat the same task tool by tool.

Research

Understanding the platform

Niq Curry led user research and developed personas that anchored the redesign. Separately, we interviewed OMD, who had originally built the platform, to understand the constraints that shaped it. I then co-facilitated a day-long London workshop with Mercedes-Benz’s team, who flew in from Stuttgart, walking them through personas, user needs, and pain points before opening the session up to ideation.

Design sprints

Three sprints in series

The three problem areas were tackled as separate design sprints, run in series so each built on the last.

Information architecture

Grouping tools around the user’s process

The original platform was organised around the tools themselves. The redesign organised them around the user’s process.

Tools were regrouped into three stages that mirrored how users actually moved through a media planning decision: looking for market opportunities in existing data; determining the audience and focus of messaging; then setting the overall budget and allocating it across media channels.

Within each stage, tools were grouped on a page, in the order users would naturally work through them. Putting related tools next to each other made the relationships between them self-evident, so users no longer had to reconstruct the logic of the platform.

The Market Opportunities stage of the IA, showing tools laid out in the order users move through them.

Configuring parameters

Global settings, adjustable anywhere

Users had been re-entering the same data into tool after tool. The redesign separated parameters that needed to be shared across tools from those that were tool-specific, so global settings could be configured once.

- Global vs. local parameters. I scoped every parameter as either global (applied across multiple tools) or local (relevant to a single tool). Globals were consolidated into a single Scenario Settings overlay, accessible from anywhere on the platform. Local parameters stayed within their tool, configurable in place.

- Pre-populated visualisations. With globals managed centrally, graphs and other visualisations could update live as users adjusted scenarios, showing them what a parameter did rather than explaining it.

- Microcopy rewritten platform-wide. All in-product copy was rewritten to be short and plain, lowering cognitive load and making the relationships between tools more intuitive.

- Exports unified across tools. Users could export from any tool using the same flow, and select multiple tools for batch export, removing the repetition built into the old platform.

The result was a single, connected platform in which content throughout reacted to global scenario changes, rather than a collection of discrete tools.

The Scenario Settings overlay. Adjusting any global parameter updates visualisations live across every tool.

Channel Optimisation: spend versus impact across paid media channels, with budget allocation in the right rail.

Onboarding

A guided introduction for less experienced users

The first two sprints had done a lot of the onboarding work. A clearer information architecture and live, demonstrative scenarios meant the platform now explained itself in ways the old one couldn’t. The onboarding sprint focused on the gap that remained, particularly for users less familiar with media analytics.

- A short guided tour on first use. A brief upfront orientation to the platform’s structure and the logic behind it.

- Tooltips and nudges, used sparingly. Tied to specific moments where users were most likely to need a prompt, with copy kept short.

- Copy pitched to the audience. Onboarding copy assumed a working knowledge of media analytics and avoided the explanatory hand-holding that often makes onboarding feel patronising to expert users.

The first-use orientation, kept short because the IA had already done most of the work.

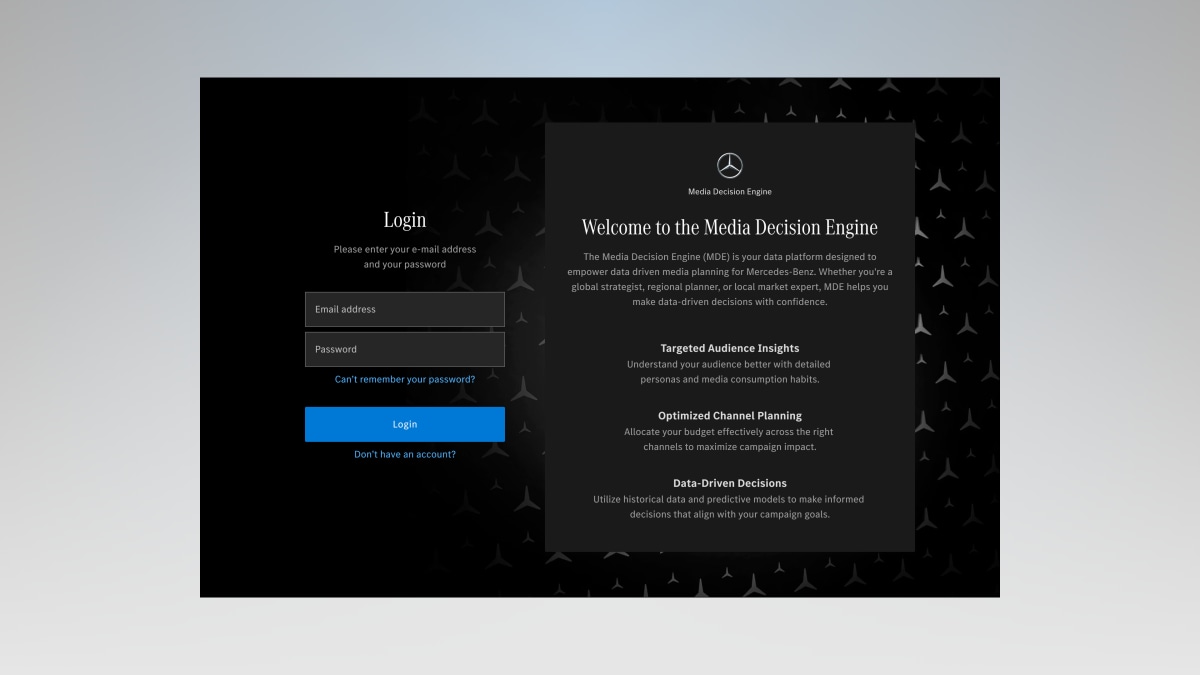

The login screen sets expectations for who the platform is for, before the user has logged in.

Impact

A powerful platform that explains itself

Final designs were delivered to Mercedes-Benz in spring 2025, with implementation underway in the months that followed. The redesigned Media Decision Engine turned a capable but hard-to-use platform into one that worked with its users rather than against them. The platform was clearer in structure and more consistent in behaviour, and required less prior knowledge to navigate.

- A structure that explains itself. 15 discrete tools were regrouped into 3 stages that mirror how planners actually reach a decision, so users no longer have to reconstruct the platform’s logic.

- Consistent behaviour across every tool. Shared interaction and data-visualisation patterns replaced the per-tool inconsistency that had forced users to re-learn the interface each time they switched.

- Self-service for less experienced planners. Live scenarios and a clearer IA carry most of the onboarding, so newer users can find what they need without hand-holding.

- Average time-on-tool: 3x. Planners had been bouncing off slow loading and confusing navigation; now they stay and work in the tool.

- Daily users: 40–50. Adoption grew from a 2–3 person daily core to 40–50 people using the platform every day.

- Fewer settings to enter per scenario: ~80%. Each setting is entered once globally rather than per tool, and visualisations update live as scenarios change.

The overhaul got a great reception from test markets. Mercedes are rolling it out across Europe, all very positive.

James Hutchinson

Data Operations Manager, OMD

Lessons learned

Redesigning inside a working product

- Running sprints in series rather than in parallel multiplies their value. Running the information architecture, global scenario settings, and onboarding sprints in parallel would have produced three competent solutions. Running them one after the other produced one coherent platform, because each sprint inherited the structural decisions of the last. The cost was time; the gain was a cohesive redesign.

- Insights from the original creators are invaluable. The OMD interviews were the most valuable research input we had. They did not validate decisions; they exposed the constraints that we would otherwise have rediscovered the hard way.